Most content teams underestimate how much of their best material never reaches its full audience. A long-form article takes significant effort to produce — research, writing, editing, fact-checking, formatting. Once published, it earns traffic through search, gets emailed to subscribers, and then recedes into the archive. The ideas in it are sound, the research still holds up, and the audience it was written for is still there — but the article is no longer actively finding them.

The problem is not the content. The problem is that content without an ongoing visual distribution strategy decays faster than it should.

Why Great Written Content Often Underperforms Without Visuals

Written content published without visual support has a structural disadvantage in the current content environment. Social platforms tend to give posts with visual elements more prominence in their distribution algorithms. Email open rates tend to improve when subject lines are paired with preview images. On LinkedIn, professional content accompanied by a strong image generally outperforms text-only posts.

A content team that produces a strong article and publishes it without channel-ready visual assets has effectively chosen to reach only the fraction of its audience that actively reads long-form content. The rest of the potential audience, who might have engaged with the same ideas presented visually, remains unreached.

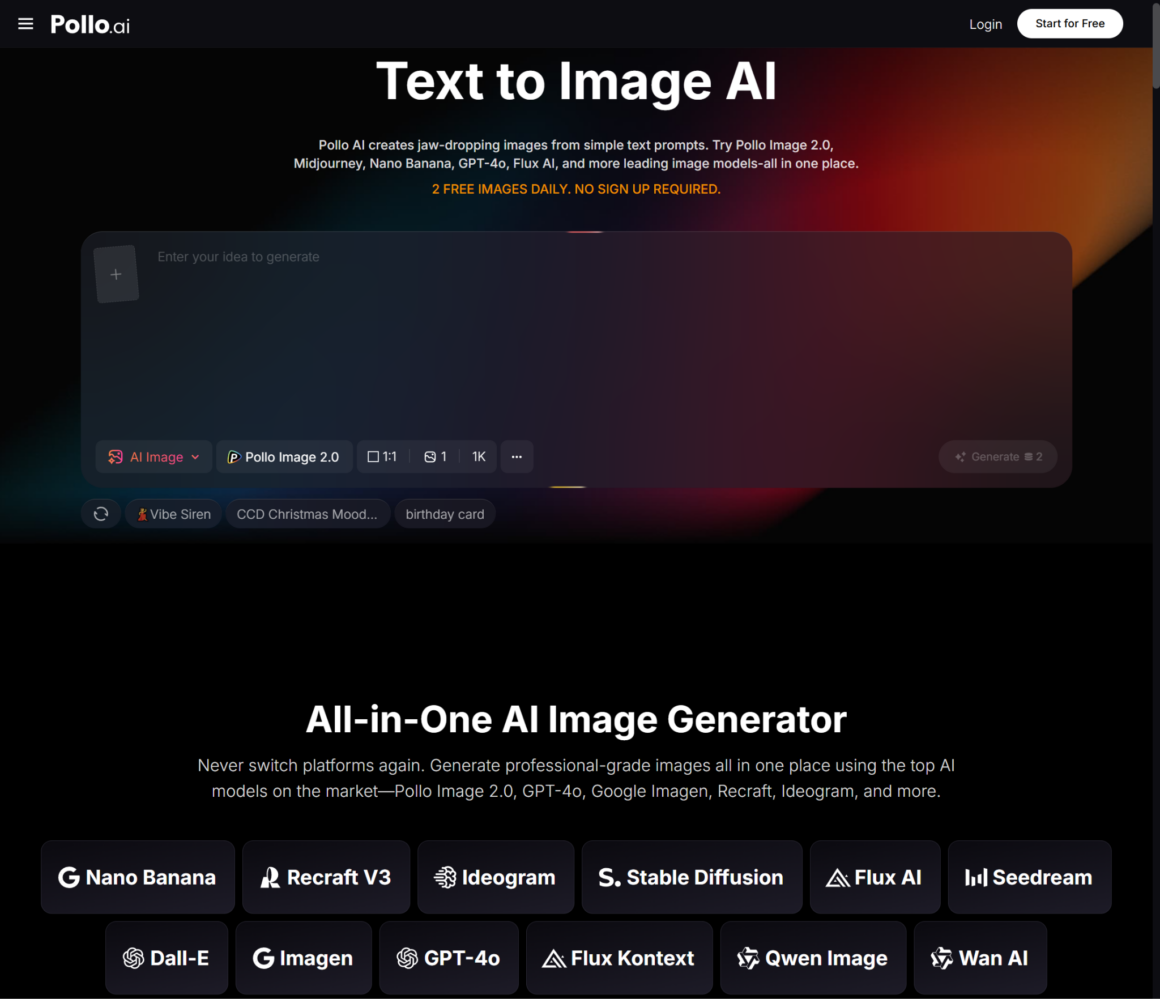

Pollo AI’s image generator makes the visual layer of content distribution significantly more accessible. Instead of creating images from scratch or waiting on the design team for each repurposing effort, content creators can generate visuals that match the themes and arguments of their articles, produce multiple versions for different social contexts, and publish them in the same production session as the original content.

The platform provides access to models including Pollo Image 2.0, FLUX, Stable Diffusion, and GPT-4o, along with over 2,000 LoRAs (lightweight fine-tuned add-ons that steer a base model toward a particular style) for style control, and a free option for getting started without upfront cost. For content teams whose visual production is bottlenecked by design resources, Pollo AI shifts the economics of repurposing from “we cannot afford to do it for every piece” to “we can do it as part of the standard publication workflow.”

How to Extract Image Prompts from Written Content

The challenge with turning an article into an image prompt is not a lack of visual material — it is the mismatch between the way writers think about ideas and the way image generation models process prompts.

A writer might describe an idea this way: “The article argues that remote teams develop stronger asynchronous communication habits than co-located teams.” An image generation model does not know what to do with that sentence directly. It produces images from visual descriptions, not from conceptual arguments.

The skill is translation: taking the core idea of a piece of content and finding the visual equivalent that conveys the same emotional or conceptual register.

For the remote teams example, the visual translation might be: “A split-screen composition showing a person working alone in a well-lit home office in the early morning, and another person at a traditional office desk at midday, calm, focused, no visual clutter, editorial photography style, warm neutral tones.”

An image model can work with that prompt directly. The resulting image does not illustrate the argument literally, but it sets the visual context and emotional register that the argument inhabits.

Developing the translation habit is the core skill for content-driven AI image generation. It requires asking: if this article were a photograph, what would the photograph be showing? What environment, what light, what mood, what compositional choices?

Generating Multiple Versions for Different Social Contexts

One article generates multiple social opportunities across different platforms, and each platform has different visual conventions. A visual that works as a LinkedIn header does not necessarily work as an Instagram story or a Twitter card.

The right approach is to treat the article as the conceptual anchor and generate platform-specific visuals that share the same thematic direction but are optimized for each context:

Blog/website header: Horizontal composition, typically 16:9 or 2:1, with enough visual complexity to be engaging but enough empty space for a headline overlay if needed.

LinkedIn post image: A slightly more formal, professional-leaning aesthetic that fits the platform’s editorial character. Works well with a text overlay that references the article’s argument.

Instagram: Vertical format (4:5 for feed posts, 9:16 for stories) with bolder visual contrast. The same conceptual direction as the blog header, but tighter and more immediate.

Email newsletter header: Typically needs more negative space and a simpler composition that works at small sizes and does not compete with the text content of the email.
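To make the channel split concrete, here is a minimal Python sketch that pairs one shared base prompt with channel-specific wording and a target image size. The blog (16:9) and Instagram (4:5) ratios come from the guidance above; the LinkedIn ratio (1.91:1, a common link-image shape) and the 3:1 email header ratio are assumptions, as are the names `ChannelSpec`, `size_for`, and `channel_prompts`, the 1536-pixel long edge, and snapping both sides to multiples of 64 (many diffusion models expect dimensions in such multiples, but check what your chosen model accepts). It does not call any Pollo AI API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ChannelSpec:
    """One distribution channel (hypothetical structure for illustration)."""
    name: str
    ratio_w: int      # width term of the aspect ratio
    ratio_h: int      # height term of the aspect ratio
    style_note: str   # channel-specific wording appended to the shared prompt


# Blog and Instagram ratios follow the guidance above; LinkedIn and email are assumptions.
CHANNELS = [
    ChannelSpec("blog_header", 16, 9, "horizontal, open space on one side for a headline"),
    ChannelSpec("linkedin", 191, 100, "formal, professional editorial look"),
    ChannelSpec("instagram", 4, 5, "vertical, bold contrast, tight framing"),
    ChannelSpec("email_header", 3, 1, "simple composition, generous negative space"),
]


def _snap(value: float, multiple: int) -> int:
    """Round a dimension to the nearest multiple, never going below one multiple."""
    return max(multiple, round(value / multiple) * multiple)


def size_for(spec: ChannelSpec, long_edge: int = 1536, multiple: int = 64) -> tuple[int, int]:
    """Return (width, height) close to the spec's ratio, longer side near long_edge."""
    if spec.ratio_w >= spec.ratio_h:
        width = long_edge
        height = long_edge * spec.ratio_h / spec.ratio_w
    else:
        height = long_edge
        width = long_edge * spec.ratio_w / spec.ratio_h
    return _snap(width, multiple), _snap(height, multiple)


def channel_prompts(base_prompt: str) -> dict[str, tuple[str, tuple[int, int]]]:
    """Pair the shared base prompt with each channel's wording and target size."""
    return {
        spec.name: (f"{base_prompt}, {spec.style_note}", size_for(spec))
        for spec in CHANNELS
    }
```

Passing the split-screen prompt from the remote teams example to `channel_prompts` yields one prompt-and-size pair per channel, all built on the same base description. Because only the appended note and the dimensions differ, the variations stay thematically related.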

Generating four variations, one for each context, takes significantly less time than creating each from scratch. The thematic consistency across all four comes from using the same model and LoRA and keeping a consistent prompt tone, which helps the visual family cohere even when individual elements differ.

Keeping the Theme Consistent Across a Series

Content repurposing often works best when it extends beyond individual articles to cover a topical series or content pillar. If a publication has ten articles on productivity in distributed teams, the visual treatment across all ten should signal that they belong together.

This is where AI image generation excels for content operations: once you have established a model-and-LoRA combination that produces visuals consistent with the series’ aesthetic, applying it to each article in the series takes minutes rather than hours.

Prompt structure for a consistent series visual identity:

- Fix the model and LoRA across the entire series

- Use a consistent compositional approach (same general framing, similar complexity level)

- Vary only the scene elements that reflect each article’s particular focus

- Keep the color palette consistent by including explicit palette descriptors in each prompt
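The four rules above can be captured in a small prompt template. The Python sketch below uses hypothetical names throughout (`SeriesStyle`, `build_request`, and the placeholder model and LoRA identifiers), and the returned dictionary is an illustrative request shape, not Pollo AI’s actual API. It holds the model, LoRA, composition, and palette fixed and lets only the scene description change from article to article.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SeriesStyle:
    """Settings held fixed for every article in a series (all values hypothetical)."""
    model: str        # the single image model used for the whole series
    lora: str         # the single LoRA used for the whole series
    composition: str  # shared framing and complexity level
    palette: str      # explicit palette descriptors repeated in every prompt
    medium: str       # overall rendering style


def build_request(style: SeriesStyle, scene: str) -> dict[str, str]:
    """Combine the fixed series settings with one article's scene description."""
    scene = scene.strip()
    if not scene:
        raise ValueError("each article needs its own scene description")
    # Scene first, then the fixed descriptors, so every prompt has the same structure.
    prompt = ", ".join([scene, style.composition, style.medium, style.palette])
    # Hypothetical request shape: map these keys onto the fields your tool exposes.
    return {"model": style.model, "lora": style.lora, "prompt": prompt}


series = SeriesStyle(
    model="example-model",
    lora="example-editorial-lora",
    composition="single subject, centered, uncluttered background",
    palette="warm neutral palette with muted teal accents",
    medium="editorial photography style",
)

# Only these scene descriptions change from article to article.
scenes = [
    "a person reviewing a shared document on a laptop at a kitchen table at dawn",
    "two video-call windows on a monitor in a quiet home office",
]
requests = [build_request(series, s) for s in scenes]
```

Keeping the fixed settings in one frozen object means a series-wide adjustment, such as a palette change, is made in one place rather than in ten separate prompts.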

The result is a visual family that signals to readers: these pieces belong together. That visual coherence is a meaningful editorial signal, and it builds the perception of the publication as an organized body of knowledge rather than a collection of unrelated pieces.

Extending Content Repurposing Beyond Static Blog Graphics

Static images are the most immediate application of AI image generation for content repurposing, but they are not the only one. The same concept — using AI tools to extend the life and reach of existing content — applies to formats beyond still images.

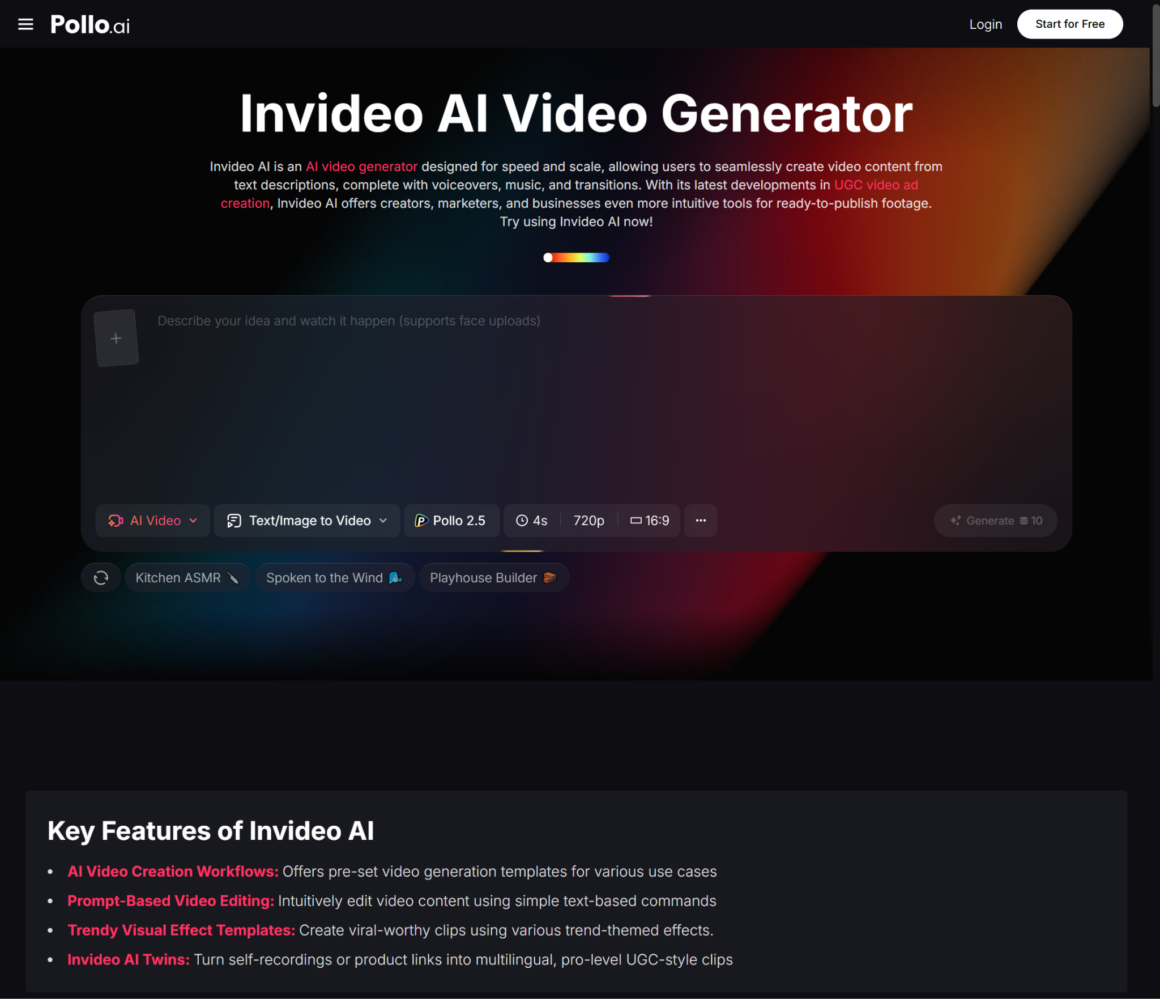

For content teams thinking about how written content might be extended into video formats, such as short-form video summaries, animated social clips, or presentation-style video content, InVideo AI is a Pollo AI reference resource that may be worth exploring as the next step in the content extension chain.

Static image generation and video-based content extension are complementary rather than mutually exclusive approaches. Starting with static image generation for a content repurposing workflow is the lower-friction entry point — the tools are accessible, the outputs are immediately usable, and the production cycle is short. Once that layer is working reliably, extending into motion-based formats becomes a natural next step rather than a parallel initiative that requires its own justification.

Helping Content Teams Reduce Their Wait for Design Resources

The operational benefit of building AI image generation into the content repurposing workflow is a reduction in the design dependency that currently limits how much repurposing actually happens.

In most content operations, repurposing is acknowledged as valuable and consistently under-resourced. The content team knows that converting a popular article into a LinkedIn carousel, a set of Twitter cards, and an email header image would extend its reach — but the design queue is occupied with higher-priority work, and by the time the repurposing images are ready, the moment has passed.

When content creators can generate their own repurposing visuals within the same session as publication, the bottleneck moves from “waiting for design” to “choosing what to repurpose” — which is a much better bottleneck to have. The decisions about which content to repurpose and how to frame it visually are creative and editorial decisions; they should live with the content team. The production of the images need not.

This is what the tool actually delivers at an operational level: not just better images, but a faster path from “we should repurpose this” to “this is already repurposed and live.” That path is the thing most content teams need, and it has been blocked by design resource constraints for long enough.
